Summary

Let’s take a look at the built-in bridge network that you get on all Linux-based Docker hosts. This network is roughly equivalent to the default NAT network that you get with Docker on Windows.

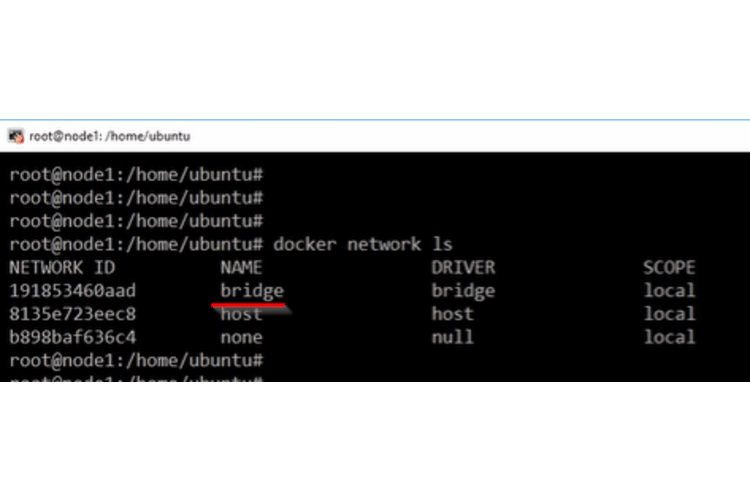

My setup: Fresh install on Linux

I’m logged in to a freshly installed Docker host running Linux. These are the three networks that I get by default.

Default networks

The one called bridge, which uses the bridge driver, is the one we’re interested in right now.
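
To reproduce that listing yourself, `docker network ls` should do it. Here is a minimal sketch, assuming the Docker CLI is installed and the daemon is running; the helper name `list_networks` is made up for illustration.

```shell
# Hypothetical helper: list every network known to this Docker host.
list_networks() {
  docker network ls
}

# Only run when the docker CLI is actually available.
if command -v docker >/dev/null 2>&1; then
  list_networks || echo "could not reach the Docker daemon" >&2
fi
```

On a fresh Linux install you would typically expect to see the bridge, host, and none networks.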

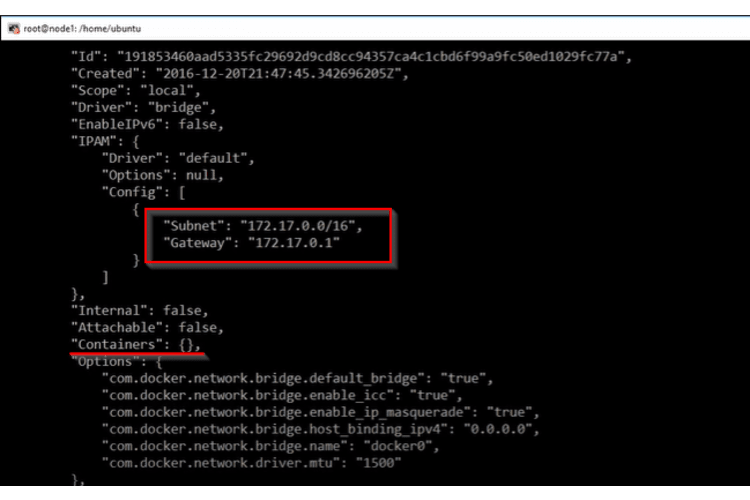

Inspect for details

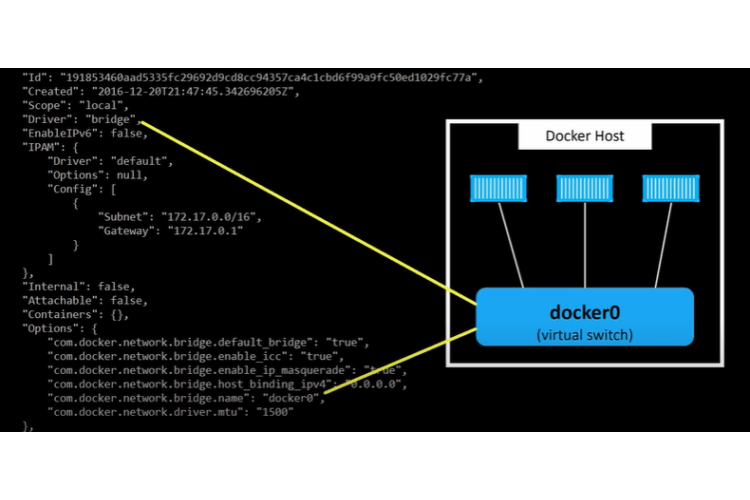

If we want more info on it, we can run an inspect command and give it the name of the network. We get the same info as before, plus a lot more detail.

docker network inspect bridge

For one thing, we can see the subnet and the gateway here. Where it says Containers, we don’t have any.
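
If you only care about the subnet and gateway, the inspect command accepts a Go template through `--format`. This is a sketch rather than the one true way to do it; the helper name `network_subnet` is hypothetical, and it assumes the network has at least one IPAM config entry.

```shell
# Hypothetical helper: print "<subnet> <gateway>" for the given network.
network_subnet() {
  docker network inspect \
    --format '{{range .IPAM.Config}}{{.Subnet}} {{.Gateway}}{{end}}' "$1"
}

# Only run when the docker CLI is actually available.
if command -v docker >/dev/null 2>&1; then
  network_subnet bridge || echo "could not inspect the bridge network" >&2
fi
```

The template walks the same `IPAM.Config` list that the full JSON output shows, so the values should match what you see in the screenshot on your own host.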

Right now this network doesn’t have any containers attached to it. But what’s underpinning all of this? How does it all work? Let’s look at what we have on our Docker host.

How the bridge network works

We’ve got a virtual switch, or bridge, called docker0. This is what really makes up the network called bridge. All we have to do is plumb containers into it and, like any layer 2 switch, any containers that get plugged into it are going to be able to talk to each other.

Because this network is created by the bridge driver, it’s confined to the Docker host that we’re logged on to. That’s because the bridge driver is all about single-host networking: it creates isolated networks and switches that only exist within a single Docker host.
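
The claim that containers plugged into docker0 can talk to each other is easy to check with two throwaway containers. The sketch below is an illustration only: the function name `ping_over_bridge` and the container name `bridge-demo` are made up, and it assumes the host can pull the alpine image.

```shell
# Hypothetical demo: start one container, then ping it from a second one.
# Neither container is given a network, so both land on the default bridge.
ping_over_bridge() {
  docker run -d --rm --name bridge-demo alpine sleep 60 >/dev/null

  # Look up the IP address the first container received on its network(s).
  target_ip=$(docker inspect \
    --format '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' bridge-demo)

  # Ping that address from a second, short-lived container.
  docker run --rm alpine ping -c 2 "$target_ip"

  # Stop the first container; --rm removes it once it has stopped.
  docker stop bridge-demo >/dev/null
}
```

Running `ping_over_bridge` on the Docker host should show replies coming back from the first container. It cleans up after itself, so the bridge ends up with no containers attached again.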

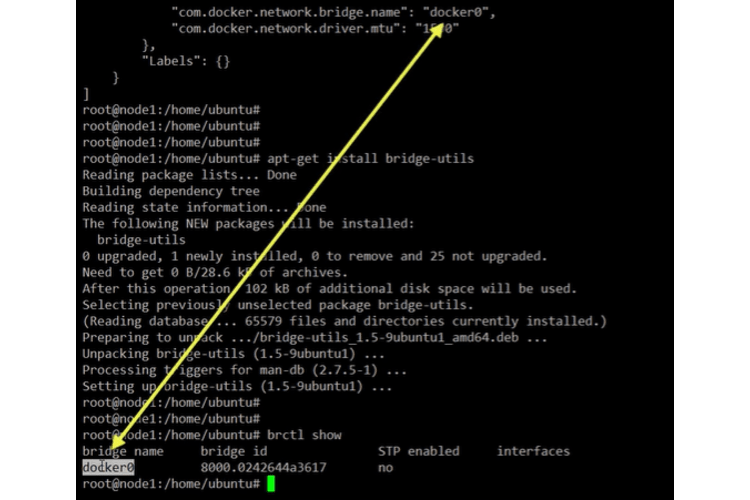

How to see the docker0 bridge

To actually see this docker0 bridge, we need to install the Linux bridge utilities package.

sudo apt-get install bridge-utils

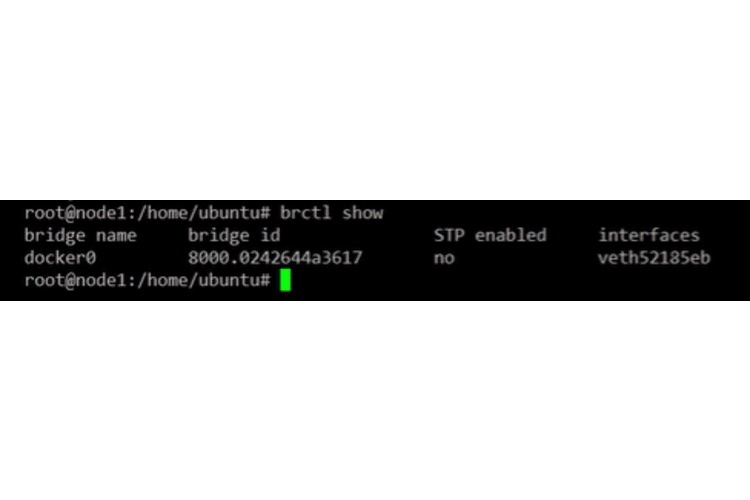

Now if we run brctl show, there’s our docker0 virtual switch.

brctl show

In the native tooling parlance it’s called a bridge, but a bridge and a switch are the same thing.

Over here we can see it’s got no interfaces attached to it. That’s because we’ve got no containers.
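
If you would rather not install bridge-utils, the `ip` tool from iproute2 can show much the same information. This is a sketch under that assumption; the helper name `bridge_ports` is hypothetical.

```shell
# Hypothetical helper: list the interfaces attached to a bridge
# (defaults to docker0 when no bridge name is given).
bridge_ports() {
  ip -brief link show master "${1:-docker0}"
}

# Only run when the ip tool is actually available.
if command -v ip >/dev/null 2>&1; then
  bridge_ports docker0 || echo "docker0 not found on this host" >&2
fi
```

With no containers running, this should print nothing, which matches the empty interfaces column in brctl show.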

Add a container to the network

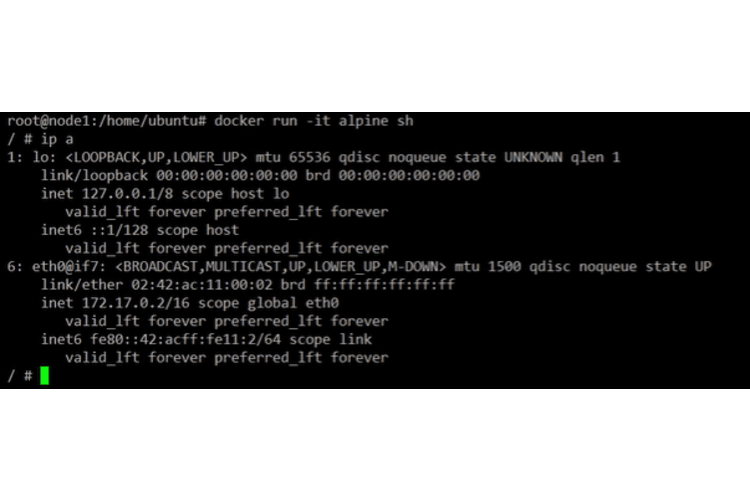

Let’s add one with a simple docker run command:

docker run -it --rm alpine sh

This command says: start a new container, base it off the Alpine image, and drop us into a shell.

Notice that we’re not specifying which network to join. By default, if we don’t tell a container which network to join, it’s going to join the bridge network.
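
To make that default explicit, you can name the network with the `--network` flag. The sketch below should behave the same as the plain docker run command above; the helper name `run_on_network` is made up for illustration.

```shell
# Hypothetical helper: start an interactive Alpine shell on a named network.
# With no argument it falls back to "bridge", which is also what Docker
# picks when --network is omitted entirely.
run_on_network() {
  docker run -it --rm --network "${1:-bridge}" alpine sh
}
```

Calling `run_on_network` or `run_on_network bridge` from an interactive terminal drops you into the same kind of shell as before.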

We’re in our container and this is our container’s IP. We don’t need to do anything now, so let’s just drop out of it but keep it running.

To drop out but keep it running, press Ctrl+P followed by Ctrl+Q.

Check now that the docker0 bridge has a container attached to it

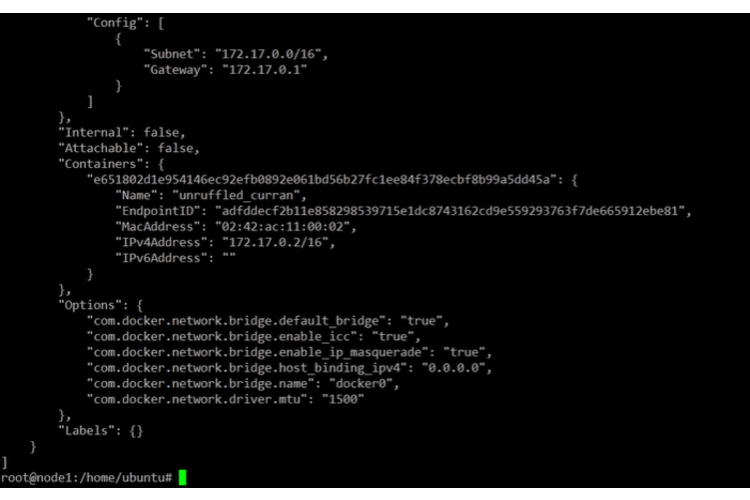

Let’s see if it did what we said it would and joined the bridge network. Let’s run the inspect command again.

We’ve got a container attached to it and there’s its IP address too.

If we look at the docker0 virtual switch again, we see it’s got an interface attached to it now. That interface is plumbed into our container.
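
To pull just the attached containers out of the inspect output, a `--format` template over the Containers section works. This is a minimal sketch; the helper name `attached_containers` is hypothetical.

```shell
# Hypothetical helper: print one "<name> <ipv4-address>" line per
# container attached to the given network.
attached_containers() {
  docker network inspect \
    --format '{{range .Containers}}{{println .Name .IPv4Address}}{{end}}' "$1"
}

# Only run when the docker CLI is actually available.
if command -v docker >/dev/null 2>&1; then
  attached_containers bridge || echo "could not inspect the bridge network" >&2
fi
```

With the Alpine container still running, this should print a single line with its name and the same IP address shown in the full inspect output.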

Conclusion

Let’s back up and recap. :)

We’re on a clean Docker install on Linux. All the networks we saw came as part of that default install, so this bridge network was created for us.

It contains a single virtual switch called docker0.

We said this is the default network and switch, meaning that if we create new containers and don’t specify a network for them to join, they’re going to get connected to the docker0 switch and be part of the bridge network. And because the bridge network is created with the bridge driver, it’s a single-host network.

If you run into an error where you cannot TCP [connect](/blog/en/kubernetes/how-to-solve-kubernetes-can-connect-with-localhost-but-not-ip) from outside a virtual machine, check our blog post on fixing connectivity issues from outside a virtual machine.